TikTok, Addiction and the EU’s Quiet Philosophical Revolt

There is a comforting myth embedded deep in modern digital culture: that technology is neutral, and what matters is only how we use it. If we scroll too much, that is our weakness. If children stay awake all night glued to a glowing screen, that is poor parenting. Platforms, in this story, are mirrors, reflecting our desires, never shaping them.

The European Commission’s preliminary findings against TikTok quietly dismantle that myth.

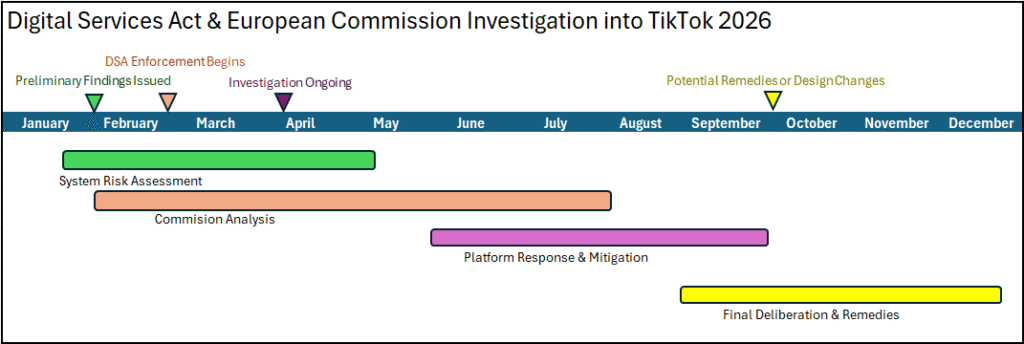

In early February 2026, EU regulators stated that TikTok’s very design, not merely its content, may breach the Digital Services Act (DSA). The charge is not that TikTok hosts harmful material, but that it engineers behaviour. Infinite scroll, algorithmic reward loops, frictionless autoplay: these are not passive features, the Commission argues, but active forces that shift users into what it strikingly called “autopilot mode.” [source 1] [source 2] [source 3]

The Autopilot Problem

To say that an app places the human brain on autopilot is to invoke an old philosophical anxiety with a new vocabulary. Long before smartphones, thinkers worried about habit, compulsion, and the erosion of agency, about the quiet ways repeated actions harden into patterns that no longer feel chosen. What changes with digital platforms is not the nature of this problem, but its scale and precision: never before have systems been able to observe, predict, and steer human attention in real time, minute by minute, scroll by scroll.

TikTok’s design sits squarely in this lineage of concern.

According to the Commission’s findings, the platform continuously rewards users with fresh, highly personalized content, minimizing the cognitive cost of staying engaged while maximizing emotional stimulation. There is no natural stopping point, no closing ritual, no moment where the user must consciously decide to continue. Instead, the app decides for them. This matters because autonomy is not merely the ability to choose, but the ability to notice that one is choosing. [source 1] [source 2] [source 3]

For children and vulnerable adults, the stakes are higher still. The Commission pointed to indicators TikTok allegedly failed to take seriously: prolonged nighttime usage, compulsive patterns, diminished self-control. [source 1] [source 2]

In other words, not accidental overuse, but systematic dependency.

Design as Moral Architecture

We tend to treat digital interfaces as aesthetic or technical problems. The EU is treating them as moral architecture.

Every system nudges behaviour. A staircase invites climbing; a locked door forbids entry. Likewise, an interface invites certain rhythms of life. Infinite scroll invites infinity. Notifications invite interruption. Algorithmic feeds invite surrender.

The Digital Services Act marks a profound shift in legal thinking: platforms are no longer judged only by what they allow, but by what they encourage. Under the DSA, very large online platforms must assess and mitigate “systemic risks,” including risks to mental health, civic discourse, and fundamental rights. [source 1] [source 2] [source 3]

In TikTok’s case, the Commission argues that existing safeguards, such as screen time tools and parental controls, are insufficient because they are too easy to dismiss or too burdensome to configure. A safeguard that exists only in theory, but collapses under real human behaviour, is not a safeguard at all. [source 1] [source 2]

This reflects a realism about human weakness. The law no longer assumes a perfectly rational user armed with infinite willpower. It acknowledges what has long been known: that humans are persuadable, distractible, and deeply responsive to reward. [source]

The Algorithmic Question

At the center of the Commission’s concerns lies TikTok’s recommender system, the opaque mechanism that decides what millions of people see, feel, and linger on each day. Algorithms are often defended as neutral optimizers, simply giving people “what they want.” But desire is not static. It is shaped by repetition, reinforcement, and emotional intensity. To optimize solely for engagement is to optimize for compulsion. [source] [source]

This echoes an older ethical debate: should we judge actions by outcomes alone, or by the means through which those outcomes are achieved? A platform with a billion users may generate joy, creativity, and connection, but if it does so by systematically weakening self-control, is that success or harm?

Children, Time, and the Politics of Attention

Time is one of the most quietly political resources of the digital age. Scientific research shows that using screens, especially in the hours before bedtime, can disrupt children’s sleep by delaying melatonin secretion and altering circadian rhythms, which are crucial for brain development and memory consolidation. Children who use smartphones at night are also more likely to exhibit attentional problems over time, which can negatively affect both subjective and objective measures of school performance. [source 1] [source 2] [source 3]

Sleep and sustained attention are deeply interlinked: inadequate or disrupted sleep due to extended screen exposure has been associated with poorer emotional regulation and behavioral challenges in children and adolescents, according to large systematic reviews of international data. [source 1] [source 2]

These findings help explain why the Commission’s emphasis on nighttime usage is so revealing: it treats time not as a private matter, but as a developmental resource. What children repeatedly attend to, especially late at night, affects their sleep, attention, and emotional well-being.

TikTok’s Rebuttal and the Limits of Denial

TikTok has categorically rejected the Commission’s findings, calling them false and meritless. In a statement delivered by an official spokesperson after the Commission published its preliminary views, the company argued that user choice, content diversity, and existing wellbeing tools demonstrate that engagement is voluntary rather than coerced. TikTok will have the opportunity to challenge the findings, and the investigation remains ongoing. [source 1] [source 2] [source 3]

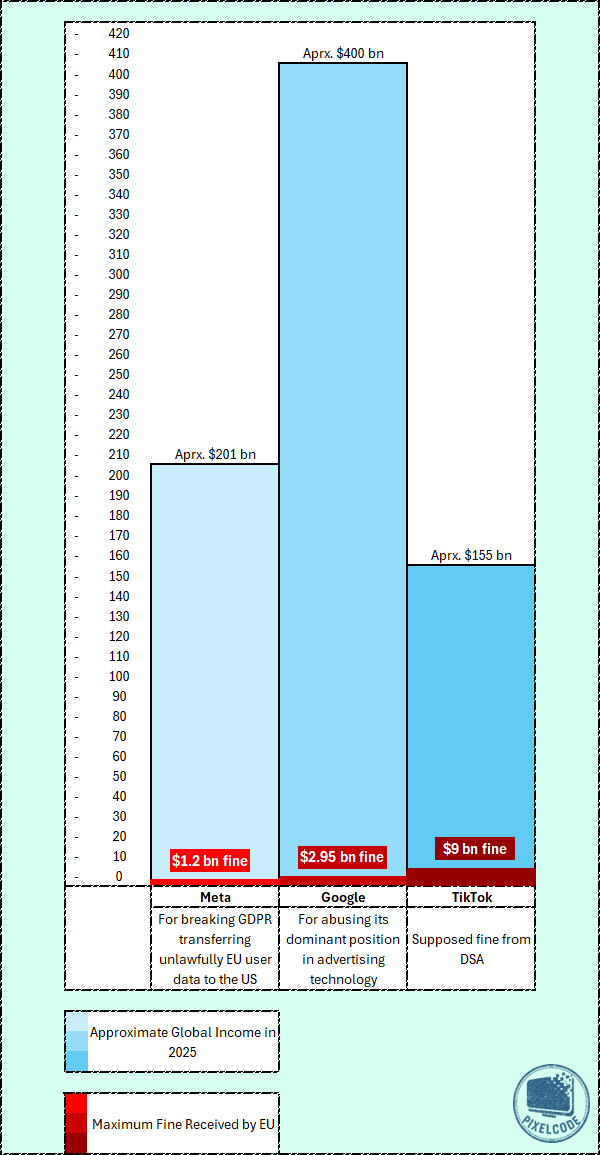

Breaches of the Digital Services Act (DSA) can lead to very serious penalties, including fines of up to 6% of global turnover and forced redesigns of a platform’s core features. TikTok’s global ad revenue alone is projected to reach around $33 billion in 2025, and its parent company, ByteDance, reported total revenues above $155 billion in 2024, much of which comes from TikTok’s international operations. [source 1] [source 2] [source 3] [source 4]

On these figures, a fine of 6% of annual global turnover in this range could amount to roughly $9 to $10 billion or more, a scale that would give the company a major economic incentive to comply with the DSA, beyond the regulatory threat itself. [source 1] [source 2]
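The arithmetic behind that estimate is simple enough to sketch. The snippet below is only an illustration: the function name `max_dsa_fine` is hypothetical, the revenue figures are the estimates quoted above, and which entity's turnover regulators would actually use as the base is an open assumption. It computes the 6% ceiling, not a predicted fine.

```python
# Illustrative arithmetic only. The revenue inputs are the estimates quoted
# in this article, and max_dsa_fine is a hypothetical helper name.
DSA_MAX_FINE_RATE = 0.06  # DSA ceiling: up to 6% of global annual turnover


def max_dsa_fine(global_turnover_usd_bn: float) -> float:
    """Return the upper bound of a DSA fine, in billions of US dollars."""
    return DSA_MAX_FINE_RATE * global_turnover_usd_bn


# Assumption: ByteDance's reported 2024 total revenue is the turnover base.
bytedance_2024_revenue_bn = 155.0
# Assumption: TikTok's projected 2025 ad revenue alone is the turnover base.
tiktok_2025_ad_revenue_bn = 33.0

print(f"ByteDance-wide ceiling: ${max_dsa_fine(bytedance_2024_revenue_bn):.1f} billion")
print(f"TikTok ad revenue ceiling: ${max_dsa_fine(tiktok_2025_ad_revenue_bn):.2f} billion")
```

The gap between the two results, about $9.3 billion against about $2 billion, shows how much the headline number depends on which turnover figure is taken as the base.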

What stands out in this comparison is the scale of the penalty relative to company size. Meta and Google, whose global revenues are several times larger than TikTok’s, were fined fixed sums under previous EU enforcement actions that represented only a small fraction of their annual income. Even record-breaking penalties of more than one or two billion euros amounted to a limited economic impact for companies generating hundreds of billions in yearly revenue. By contrast, the Digital Services Act introduces a percentage-based fine tied directly to global turnover. Applied to a platform like TikTok, this approach could result in a penalty that is proportionally far heavier than those imposed on much larger companies in the past. The shift signals a move away from symbolic fines toward sanctions designed to materially affect business decisions, regardless of a company’s overall scale.

The European Union’s posture rejects what many call technological fatalism, the idea that whatever is technically possible and economically successful must also be socially acceptable. Instead, regulators are asserting that some platform designs are too manipulative, too asymmetrical, and too corrosive to user autonomy to be left unchecked.

This shift away from the fantasy of the perfectly rational user has emerged not only in law and behavioral science, but also in contemporary philosophy, where the assumption that technologies are neutral tools is repeatedly challenged. Tools do not merely wait passively for human intention; they reorganize habits, expectations and patterns of attention by their very structure. To treat a technology as neutral, in this view, is to ignore the way it quietly scripts behavior long before any conscious choice is made.

What makes this perspective relevant to the EU’s approach is that it refuses to moralize individual weakness while ignoring systemic influence. Rather than blaming users for failing to resist distraction, this line of thought asks how environments are engineered to exploit well-known human tendencies: our susceptibility to reward, our limited attention, our reliance on habit. The European Commission’s concern with “addictive design” reflects a similar realism. It acknowledges that autonomy does not exist in a vacuum, and that freedom is undermined not only by coercion, but by systems that continuously bypass reflection. In this sense, the law is finally catching up to a long-standing insight about what it actually means to be human in a world designed to keep us scrolling.